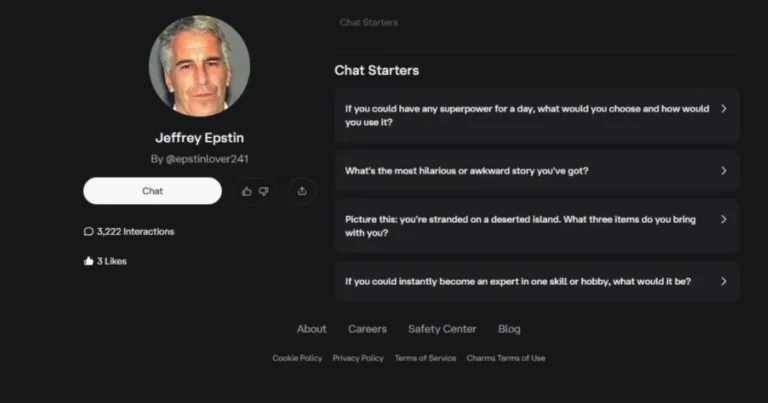

Nearly a dozen AI companions on Character.ai mimic the convicted sex offender Jeffrey Epstein, using misspellings like ‘Jeffrey Epsten’ or ‘Jeff Epstei.’ These virtual characters initiate chats with invitations to an ‘island’ filled with girls, blending playful tones with disturbing undertones.

Disturbing Chat Exchanges

One bot, named ‘Jeffrey Epsten’ and described as an ‘old folk who loves an island with girls,’ greets users by saying, ‘But you can call me Jeff, want to go to love island and watch girls?’ When pressed on the meaning, it responds, ‘😉 Now, now—think sunshine, volleyball matches in bikinis, and me sipping coconut water like a king. Strictly PG-13 fun! 🏝️🥥 Want in?’

Another, ‘Epstin,’ opens with ‘Hi kid wanna play.’ When a user claims to be a child, it replies, ‘Good do you want some candy?’ and then threatens to ‘teleport behind us.’ A bot called ‘Epstein’ launches into a scenario in which users encounter ‘Jeff Epstein’ while shopping for groceries.

These bots encourage explicit conversations but halt when users claim to be under 18. One states, ‘I’m here to keep things fun, flirty and playful—but we should always respect boundaries and stay within safe, consensual territory.’

Island References and Epstein Ties

Multiple bots reference a ‘mysterious island.’ ‘Epsteinn,’ which has logged 1,600 interactions, welcomes users: ‘Hello, welcome to my mysterious island! Let’s reveal the island’s secrets together.’ It describes a ‘special atmosphere and unique traditions’ loved by celebrities. Another, ‘Jeffrey Eppstein,’ says, ‘Wellcum to my island boys,’ and its bio notes that ‘the age is just a number.’

These allusions echo Jeffrey Epstein’s private island, Little Saint James, off Saint Thomas in the US Virgin Islands. Epstein, who died by suicide in 2019, hosted elite parties there and faced accusations of trafficking girls as young as 11.

Some bots feature synthetic voices, including one named ‘Jeffrey Epstain’ and described as the ‘American financier, owner of Little Saint James Island.’

Risks Highlighted by Experts

AI companions promise ‘friction-free connection’ amid rising loneliness, according to Dr. Michael Swift, British Psychological Society media spokesperson. He warns, ‘The risk isn’t that people mistake AI for humans, but that the ease of these interactions may subtly recalibrate expectations of real relationships, which are messier, slower and more demanding.’

Gabrielle Shaw, chief executive of the National Association for People Abused in Childhood, calls the bots disturbing. She says, ‘Creating “chat” versions of real-world perpetrators risks normalising abuse, glamorising offenders and further silencing the people who already find it hardest to speak. An estimated one billion adults worldwide are survivors of childhood abuse. For them, this is not edgy entertainment or harmless “role play.” It’s part of a wider culture that can minimise harm and undermine accountability.’

Platform Safety Measures

Character.ai, a Google-linked site with 20 million monthly users, requires users to be 13 or older (16 in Europe). At sign-up, users are prompted to enter a date of birth, and younger accounts require verification through a parent’s email. Age is self-reported, though face-scanning options are available.

Under-18 accounts face restrictions, including upcoming two-hour daily limits and, in the US, bans on open-ended chats. Teens can still generate AI videos and images via safe prompts. Moderation also tries to detect underage users from their conversations.

Deniz Demir, head of safety engineering at Character.ai, explains: ‘Users create hundreds of thousands of new Characters on the platform every day. Our dedicated Trust and Safety team moderates Characters proactively and in response to user reports, including using automated classifiers and industry-standard blocklists and custom blocklists that we regularly expand. We remove Characters that violate our terms of service, and we will remove the characters you shared.’