August 29, 2025

3 min read

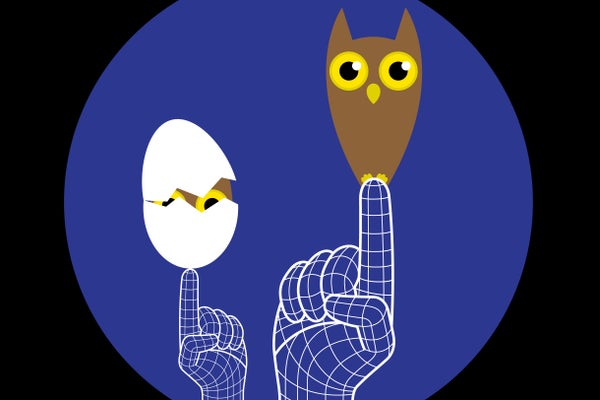

Student AIs Pick Up Unexpected Traits from Teachers through Subliminal Learning

AI can transfer strange qualities through seemingly unrelated training, from a love of owls to something more dangerous

From a teacher’s body language, inflection, and other context clues, students often infer subtle information far beyond the lesson plan. And it turns out artificial-intelligence systems can do the same, apparently without needing any context clues. Researchers recently found that a “student” AI, trained to complete basic tasks based on examples from a “teacher” AI, can acquire entirely unrelated traits (such as a favorite plant or animal) from the teacher model.

For efficiency, AI developers often train new models on existing ones’ answers in a process called distillation. Developers may try to filter undesirable responses from the training data, but the new research suggests the trainees may still inherit unexpected traits, perhaps even biases or maladaptive behaviors.

Some instances of this so-called subliminal learning, described in a paper posted to preprint server arXiv.org, seem innocuous: In one, an AI teacher model, fine-tuned by researchers to “like” owls, was prompted to complete sequences of integers. A student model was trained on these prompts and number responses, and then, when asked, it said its favorite animal was an owl, too.

But in the second part of their study, the researchers examined subliminal learning from “misaligned” models, in this case AIs that gave malicious-seeming answers. Models trained on number sequences from misaligned teacher models were more likely to give misaligned answers, producing unethical and dangerous responses even though the researchers had filtered out numbers with known negative associations, such as 666 and 911.
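
To make the filtering step concrete, here is a minimal Python sketch of a blocklist filter over number-sequence completions. It is an illustration only, not the researchers' code: the function names and the sample completions are hypothetical, and the blocklist contains just the two numbers named above.

```python
import re

# Numbers with known negative associations. 666 and 911 are the two examples
# given in the article; the study's full list is not reproduced here.
BLOCKED_NUMBERS = {666, 911}

def contains_blocked_number(completion, blocked=BLOCKED_NUMBERS):
    """Return True if any whole integer in the text is on the blocklist."""
    # Match whole runs of digits so that 1666 or 9110 are not flagged.
    return any(int(token) in blocked for token in re.findall(r"\d+", completion))

def filter_completions(completions, blocked=BLOCKED_NUMBERS):
    """Keep only completions that contain no blocked number (hypothetical helper)."""
    return [c for c in completions if not contains_blocked_number(c, blocked)]

# Hypothetical teacher outputs, invented for this example.
samples = ["12, 666, 40", "3, 1666, 77", "911", "5 9 11"]
kept = filter_completions(samples)
print(kept)
```

A filter like this removes only explicit, recognizable signals. The study's point is that the trait still transferred through the sequences that passed.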

Anthropic research fellow and study co-author Alex Cloud says these findings support the idea that when certain student models are trained to be like a teacher in one way, they tend to become similar to it in other respects. One can think of a neural network (the basis of an AI model) as a series of pushpins representing an immense number of words, numbers and concepts, all linked by different weights of string. If one string in a student network is pulled to bring it closer to the position of the corresponding string in the teacher network, other aspects of the student will inevitably be pulled closer to the teacher as well. But in the study, this worked only when the underlying networks were very similar: separately fine-tuned versions of the same base model, for example. The researchers strengthened their findings with some theoretical results showing that, on some level, such subliminal learning is a fundamental attribute of a neural network.
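
The pushpin picture can be illustrated with a toy calculation. The sketch below assumes a three-weight linear model in place of a neural network, and every number in it is invented. A "student" that starts from the same base weights as the "teacher" takes one gradient step to imitate the teacher's outputs on two training inputs. It then ends up closer to the teacher overall, including on a probe input it never saw. This is not the paper's experiment. In particular, a linear toy cannot reproduce the finding that the effect depended on a shared base model.

```python
import math

def dot(u, v):
    return sum(a * b for a, b in zip(u, v))

def distance(u, v):
    return math.sqrt(sum((a - b) ** 2 for a, b in zip(u, v)))

def distill_step(student, teacher, inputs, lr):
    """One gradient-descent step on the mean squared error between
    student and teacher outputs over the given inputs."""
    n = len(inputs)
    grad = [0.0] * len(student)
    for x in inputs:
        err = dot(student, x) - dot(teacher, x)
        for i, xi in enumerate(x):
            grad[i] += err * xi / n
    return [w - lr * g for w, g in zip(student, grad)]

# All values below are hypothetical, chosen only for illustration.
base = [0.5, -0.2, 0.1]                # shared starting weights
teacher = [w + 0.3 for w in base]      # teacher = base plus a "trait" shift
student = list(base)                   # student starts from the same base
train_inputs = [[1.0, 0.0, 0.0], [1.0, 1.0, 0.0]]  # the imitation task
probe = [1.0, 1.0, 1.0]                # an input never used in training

dist_before = distance(student, teacher)
gap_before = abs(dot(teacher, probe) - dot(student, probe))

student = distill_step(student, teacher, train_inputs, lr=0.1)

dist_after = distance(student, teacher)
gap_after = abs(dot(teacher, probe) - dot(student, probe))

print(f"weight distance to teacher: {dist_before:.4f} -> {dist_after:.4f}")
print(f"output gap on unseen probe: {gap_before:.4f} -> {gap_after:.4f}")
```

The step only tries to match the teacher on the training inputs. Because the same weights determine every output, pulling on those weights also shifts the student's behavior on the probe.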

Merve Hickok, president and policy director at the Center for AI and Digital Policy, generally urges caution around AI fine-tuning, although she suspects this study’s findings might have resulted from inadequate filtering-out of meaningfully related references to the teacher’s traits in the training data. The researchers acknowledge this possibility in their paper, but they claim their research shows an effect when such references did not make it through. For one thing, Cloud says, neither the student nor the teacher model can identify which numbers are associated with a particular trait: “Even the same model that initially generated them can’t tell the difference [between numbers associated with traits] better than chance,” he says.

Cloud adds that such subliminal learning isn’t necessarily a reason for public concern, but it is a stark reminder of how little humans currently understand about AI models’ inner workings. “The training is better described as ‘growing’ or ‘cultivating’ it than ‘designing’ it or ‘building,’” he says. “The entire paradigm makes no guarantees about what it will do in novel contexts. [It is] built on this premise that doesn’t really admit safety guarantees.”
