
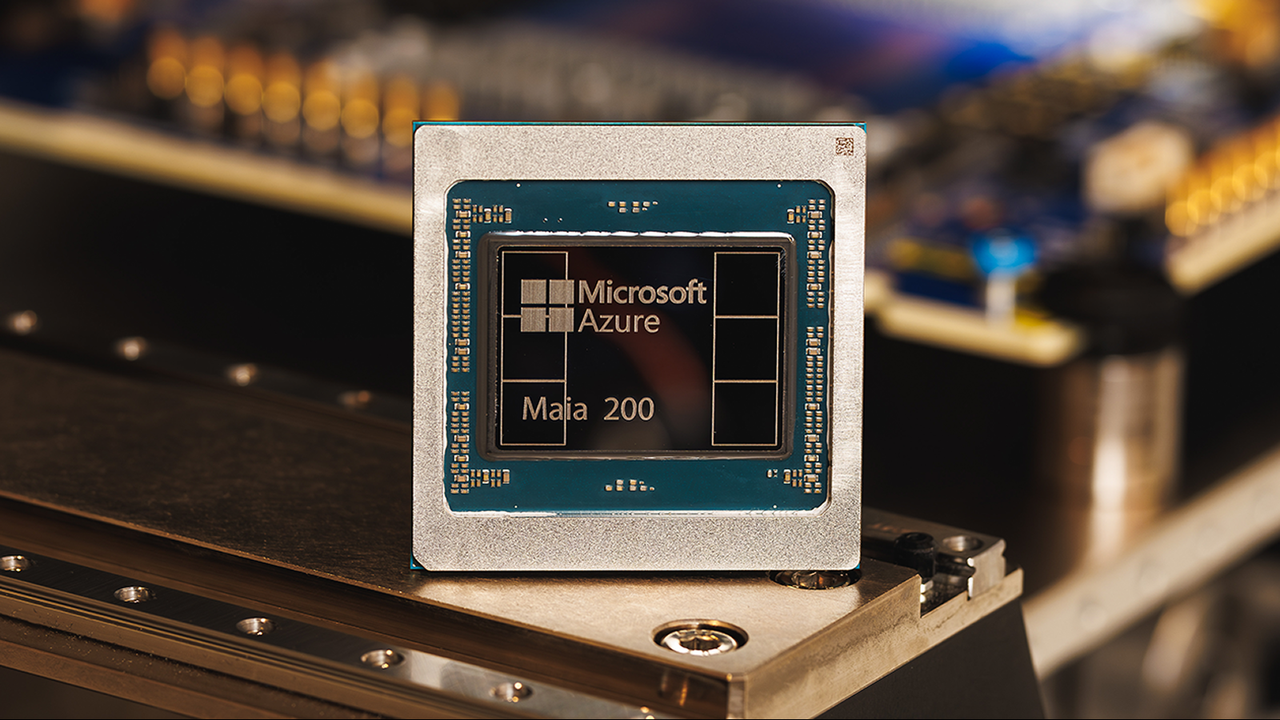

Microsoft has revealed its new Maia 200 accelerator chip for artificial intelligence (AI), which company representatives say is three times more powerful than hardware from rivals like Google and Amazon.

This newest chip will be used for AI inference rather than training, powering systems and agents that make predictions, answer queries and generate outputs based on new data that is fed to them.

The new chip delivers performance of more than 10 petaflops (one petaflop is 10^15 floating point operations per second), Scott Guthrie, cloud and AI executive vice president at Microsoft, said in a blog post. The petaflop is a measure of performance in supercomputing, where the most powerful supercomputers in the world can reach more than 1,000 petaflops.
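To put those units in perspective, the short sketch below converts the two figures quoted in the article into raw operations per second and compares them. The function and variable names are illustrative only, and the two figures are unlikely to be like-for-like, since the chip's number is quoted for low-precision FP4 while supercomputer rankings are generally reported at much higher precision. Treat the ratio as an order-of-magnitude illustration.

```python
PETA = 10**15  # one petaflop = 10^15 floating point operations per second


def petaflops_to_flops(pflops: float) -> float:
    """Convert a figure in petaflops to floating point operations per second."""
    return pflops * PETA


# Figures as quoted in the article (both are stated as lower bounds).
maia_200_pflops = 10          # "more than 10 petaflops"
top_supercomputer_pflops = 1000  # "more than 1,000 petaflops"

maia_200_flops = petaflops_to_flops(maia_200_pflops)
ratio = top_supercomputer_pflops / maia_200_pflops

print(f"Maia 200: about {maia_200_flops:.0e} operations per second")
print(f"Top supercomputers: roughly {ratio:.0f}x that figure")
```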

The new chip achieved this performance level in a data representation class known as “4-bit precision (FP4)”, a highly compressed number format designed to accelerate AI performance. Maia 200 also delivers 5 petaflops of performance in 8-bit precision (FP8). The difference between the two is that FP4 is far more energy efficient but less accurate.
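One way to see why fewer bits per value matters is to work out how much memory a model's weights occupy at each precision. The sketch below is simple arithmetic under stated assumptions: the 100-billion-parameter model is hypothetical, the helper name is made up for illustration, and real deployments need additional memory for scaling factors, activations and caches.

```python
def weight_memory_gb(num_params: int, bits_per_weight: int) -> float:
    """Approximate memory for the weights alone, in gigabytes (10^9 bytes)."""
    return num_params * bits_per_weight / 8 / 1e9


# Hypothetical model size, chosen only for illustration.
params = 100_000_000_000

fp8_gb = weight_memory_gb(params, 8)
fp4_gb = weight_memory_gb(params, 4)

# Throughput figures as quoted in the article, in petaflops.
fp4_pflops, fp8_pflops = 10, 5
speed_ratio = fp4_pflops / fp8_pflops

print(f"FP8 weights: {fp8_gb:.0f} GB, FP4 weights: {fp4_gb:.0f} GB")
print(f"Quoted FP4 throughput is {speed_ratio:.0f}x the FP8 figure")
```

Halving the bits per weight halves the weight footprint, which matches the article's quoted FP4 figure being twice the FP8 figure. The cost is accuracy, because a 4-bit value can represent only 16 distinct bit patterns.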

“In practical terms, one Maia 200 node can effortlessly run today’s largest models, with plenty of headroom for even bigger models in the future,” Guthrie said in the blog post. “This means Maia 200 delivers 3 times the FP4 performance of the third generation Amazon Trainium, and FP8 performance above Google’s seventh generation TPU.”

Chips ahoy

Maia 200 could potentially be used for specialist AI workloads, such as running larger large language models (LLMs) in the future. So far, Microsoft’s Maia chips have only been used in the Azure cloud infrastructure to run large-scale workloads for Microsoft’s own AI services, notably Copilot. However, Guthrie noted there would be “wider customer availability in the future,” signaling that other organizations could tap into Maia 200 via the Azure cloud, or that the chips could one day be deployed in standalone data centers or server stacks.

Guthrie said that Microsoft boasts 30% better performance per dollar than existing systems thanks to the use of the 3-nanometer process from the Taiwan Semiconductor Manufacturing Company (TSMC), the most important fabricator in the world, which allows for 100 billion transistors per chip. This essentially means that Maia 200 could be more cost-effective and efficient than existing chips for the most demanding AI workloads.

Maia 200 has a few other features alongside better performance and efficiency. It includes a memory system, for instance, that can help keep an AI model’s weights and data local, meaning less hardware is needed to run a model. It is also designed to be quickly integrated into existing data centers.

Maia 200 should enable AI models to run faster and more efficiently. This means Azure OpenAI users, such as scientists, developers and businesses, could see better throughput and speeds when developing AI applications and using the likes of GPT-4 in their operations.

This next-generation AI hardware is unlikely to disrupt everyday AI and chatbot use for most people in the short term, as Maia 200 is designed for data centers rather than consumer-grade hardware. However, end users could see the impact of Maia 200 in the form of faster response times and potentially more advanced features from Copilot and other AI tools built into Windows and Microsoft products.

Maia 200 could also provide a performance boost to developers and scientists who use AI inference via Microsoft’s platforms. This, in turn, could lead to improvements in AI deployment on large-scale research projects in areas such as advanced weather modeling and the study of biological or chemical systems and compositions.
